The growing misuse of artificial intelligence in schools has once again come under intense scrutiny after a disturbing case in Lancaster, Pennsylvania, where teenage students used AI tools to create fake, explicit images of their classmates. What began as a hidden digital act quickly escalated into a national conversation about ethics, law, and the emotional cost of emerging technologies.

In a world where artificial intelligence is advancing faster than regulation can keep pace, this case has become a sobering reminder that innovation without responsibility can carry serious consequences, especially for young people navigating digital spaces.

Inside the Lancaster AI Deepfake Case

The incident centres on two 14-year-old boys who used artificial intelligence software to generate hundreds of manipulated images of their female classmates. According to court proceedings, the images were created using ordinary photos sourced from social media and school materials, then altered to appear explicit without the victims’ consent.

Investigators revealed that at least 59 girls were affected, with around 350 fake images produced and circulated among students. The scale alone shocked parents, educators, and legal experts, but the emotional toll on victims proved even more troubling.

Some of the affected students described experiencing anxiety, loss of trust, and academic difficulties after discovering the images. These are not abstract harms. They are deeply personal consequences that can shape a young person’s sense of safety and identity.

In court, the judge noted the seriousness of the offence, stating that had the offenders been adults, the outcome would likely have involved prison time. Instead, the boys were sentenced to probation, ordered to complete community service, and instructed to avoid any contact with the victims.

While the legal outcome may appear lenient to some observers, it reflects the complexities of dealing with minors in a rapidly evolving digital crime landscape.

The Human Cost of Synthetic Abuse

At its core, this case is not just about technology. It is about people. It is about the psychological and social damage caused when digital tools are used to exploit and humiliate others.

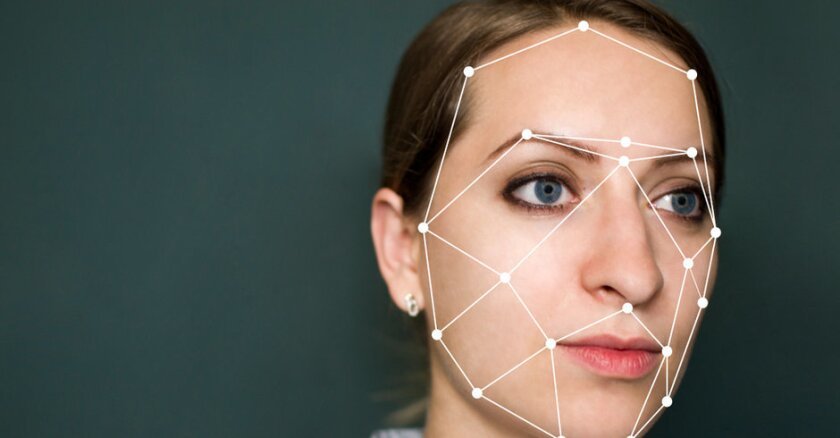

Deepfakes, which are AI-generated images, videos, or audio designed to look real, have become increasingly sophisticated. What once required advanced technical skills can now be done with widely available apps and platforms, lowering the barrier for misuse.

For victims, the harm goes beyond embarrassment. There is a lasting sense of violation. Even when the images are fake, the impact is real. Victims often struggle with fear, stigma, and the feeling that their identity has been taken out of their control.

In schools, where trust and peer relationships are essential, such incidents can disrupt entire communities. Students begin to question not just each other, but the digital environment they inhabit daily.

This is particularly concerning in an age where young people are already deeply immersed in social media. The blending of AI tools with online culture has created a situation where harmful content can spread quickly, often before adults even realise what is happening.

Legal Systems Struggling to Catch Up

One of the biggest challenges highlighted by the Lancaster case is the gap between technological capability and legal preparedness.

Although laws are beginning to emerge, many jurisdictions are still playing catch-up. In the United States, recent legislation such as the Take It Down Act aims to criminalise the publication of non-consensual explicit content, including AI-generated images, and to require platforms to remove it. However, enforcement remains uneven, and awareness is still limited.

Globally, governments are grappling with how to define and regulate deepfake abuse. Is it harassment, exploitation, or something entirely new? In many cases, it is all of the above.

Experts argue that existing laws on bullying and harassment are not sufficient to address the unique nature of AI-generated content. Unlike traditional forms of abuse, deepfakes can be created at scale, shared anonymously, and remain online indefinitely.

There is also the question of accountability. Should responsibility lie with the individuals who create the content, the platforms that host it, or the developers who build the tools?

These are complex legal and ethical questions, and there are no easy answers.

A Warning Signal for Schools and Society

Beyond the courtroom, the Lancaster incident serves as a warning for schools, parents, and policymakers worldwide.

Artificial intelligence is not inherently harmful. In fact, it offers enormous potential for education, healthcare, and innovation. But like any powerful tool, it can be misused when proper safeguards are not in place.

Educators are now being urged to take a more proactive role in digital literacy. Students need to understand not just how to use technology, but the consequences of abusing it. Awareness campaigns, stricter school policies, and better reporting systems are becoming essential.

At the same time, parents are being encouraged to engage more actively with their children’s digital lives. The assumption that young people naturally understand technology can be misleading. While they may know how to use apps, they may not fully grasp the ethical implications.

Technology companies also have a critical role to play. As developers of AI tools, they are in a position to build safeguards that prevent misuse. This could include watermarking systems, stricter access controls, and rapid response mechanisms for harmful content.

Ultimately, the goal is not to restrict innovation, but to ensure that it develops in a way that protects human dignity and safety.

The Lancaster case may have occurred in the United States, but its implications are global. From Nigeria to Europe, the same technologies are accessible, and the same risks exist.

As artificial intelligence continues to evolve, societies must decide how to balance progress with responsibility. The choices made today will shape not just the future of technology, but the kind of world young people will grow up in.

For now, one thing is clear. The conversation around AI, ethics, and accountability is no longer optional. It is urgent.
